Missing Bullet Holes

In 1943, during World War II, the US military was trying to determine the optimum amount and placement of armour on fighter planes and bombers. They knew that covering an entire plane in armour would make it heavier, slower, and less manoeuvrable, and therefore more likely to be shot down. On the other hand, fitting too little armour would leave a plane vulnerable to stray bullets.

The Challenge

“Too much armour is bad and so is too little.”

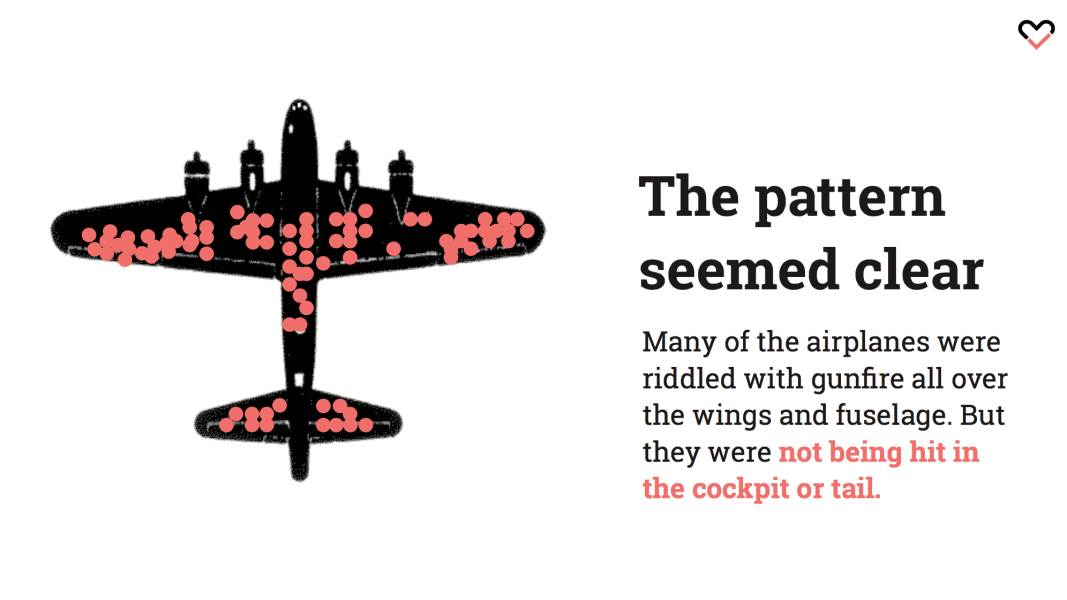

To find the answer, the military began studying where bullet holes appeared on the planes that made it back to base. This showed the Allies which areas were most commonly hit by enemy fire.

Based on these bullet-hole patterns, the plan was to add armour to the areas that had been hit the most (the wings, fuel system, and fuselage) but, oddly enough, not to the engines, which had the smallest number of bullet holes per square meter. The goal was to strengthen the most commonly damaged parts of the planes and so reduce the number that were shot down.

The Missing Planes

A mathematician, Abraham Wald, pointed out that there was another way to look at the data. Perhaps certain areas of the returning planes had few bullet holes because planes hit in those areas did not return. The missing bullet holes were on the missing planes, the ones that had been shot down. So the vulnerable places were not where the returning planes had been hit. They were where the planes that didn't return had been hit. This insight led to armour being reinforced on the parts of the planes where the returning aircraft showed the fewest bullet holes, such as the engines.

What is Survivorship Bias?

Survivorship bias is a form of selection bias. It occurs when an event or outcome is evaluated by looking only at the survivors. If you restrict your measurements to the final sample and leave out the part of the original sample that didn't survive, you can reach conclusions that are entirely wrong.
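A small simulation can make this selection effect concrete. The sketch below is a hypothetical model, not historical data. It assumes that every hit lands in one of four sections with equal probability. It also assumes that a hit in the engine is far more likely to bring a plane down. The section names and the `LOSS_PROB` values are invented for illustration. The code then compares where hits land across all planes with where they show up on the planes that return.

```python
import random
from collections import Counter

# Hypothetical aircraft sections; each hit is equally likely to land in any of them.
SECTIONS = ["engine", "fuselage", "wings", "fuel system"]

# Hypothetical (made-up) probability that a single hit in a section downs the plane.
LOSS_PROB = {"engine": 0.5, "fuselage": 0.05, "wings": 0.05, "fuel system": 0.1}


def simulate(n_planes=10_000, max_hits=5, seed=0):
    """Return hit counts per section for all planes and for returning planes only."""
    rng = random.Random(seed)
    all_hits = Counter()
    survivor_hits = Counter()
    for _ in range(n_planes):
        # Each plane takes between 0 and max_hits hits, spread uniformly over sections.
        hits = [rng.choice(SECTIONS) for _ in range(rng.randint(0, max_hits))]
        # The plane returns only if none of its hits was fatal.
        returned = all(rng.random() >= LOSS_PROB[s] for s in hits)
        all_hits.update(hits)
        if returned:
            # Only these holes would ever be seen back at base.
            survivor_hits.update(hits)
    return all_hits, survivor_hits


def share(counts, section):
    """Fraction of all recorded hits that landed in the given section."""
    total = sum(counts.values())
    return counts[section] / total if total else 0.0


if __name__ == "__main__":
    all_hits, survivor_hits = simulate()
    for s in SECTIONS:
        print(f"{s:12s} all planes: {share(all_hits, s):.2f}  "
              f"returned: {share(survivor_hits, s):.2f}")
```

Every hit is equally likely to land anywhere, so each section takes about a quarter of the hits across all planes. Among the returning planes, the engine's expected share falls to roughly 0.5 / 3.3 ≈ 0.15 under these assumed probabilities. Planes hit in the engine were filtered out before anyone could count their holes.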

Overall, this World War II anecdote shows why conclusions drawn only from the data that survives can be wrong. The story behind the data is arguably more important than the data itself. More precisely, the reason certain pieces of data are missing may be more meaningful than the data we have.